An /ask tool to make Claude Code burn fewer tokens

The Details #218

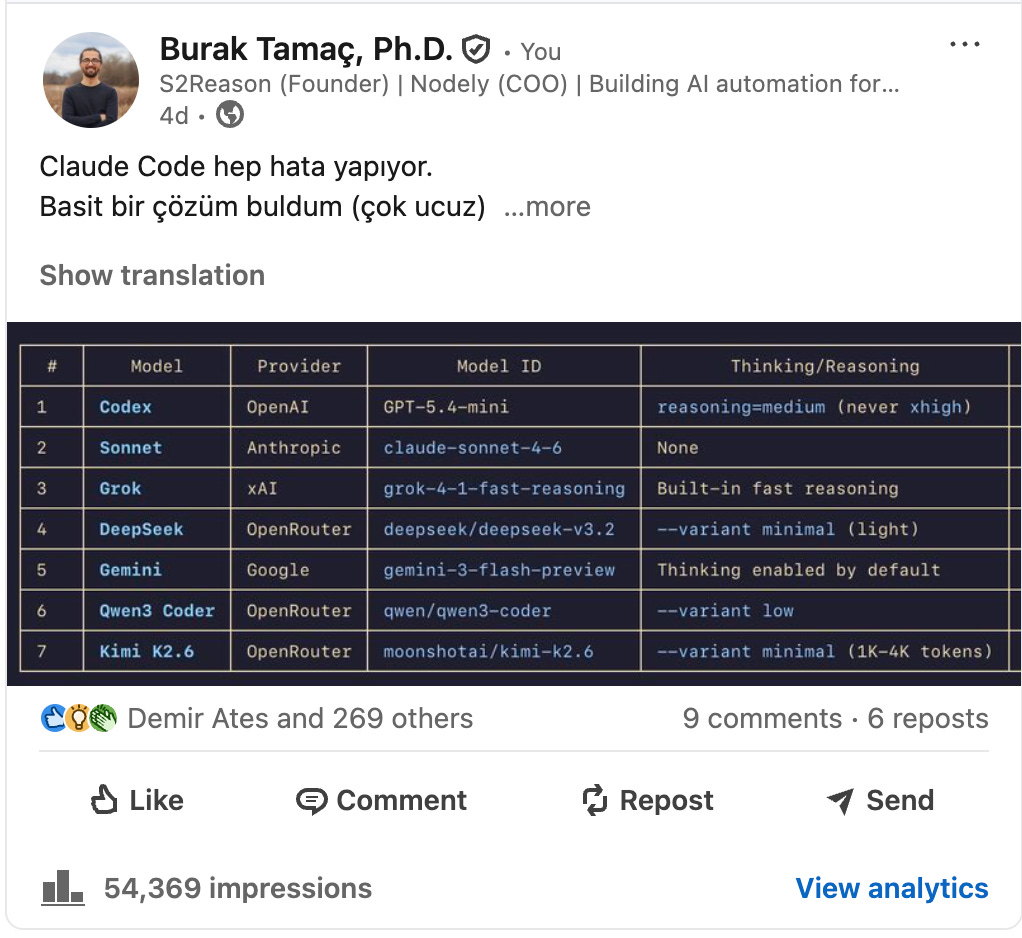

I got a lot of "how do we do this?" questions about the post below.

I had Claude prepare a document (👇). Copy and paste it into Claude Code. It will direct you to a few sites to get API keys, and after that the tool will be ready to use.

⚠️ But keep this in mind:

Customize it for yourself.

The way I use it may be unnecessary for you. So ask Claude: "Is this tool useful for me? If not, how can we make it useful? Let's discuss it together."

If you don't write this, you will probably end up with a tool that is unnecessary and of no use to you.

We pay for every action we take without thinking by burning extra tokens.

Why did I name it "ask"?

Because it is easy to type. On a phone, the menu doesn't appear when you type /, so typing /ask is very simple. Of course, you can change it.

If a problem comes up, open another session and tell Claude to solve it.

# /ask Tool Setup Guide

This document is everything you need to set up the `/ask` slash command in Claude Code. It runs 6 AI models in parallel (Codex, Opus 4.7, Grok, DeepSeek V4-Flash, Gemini, Kimi K2-0905) to review your code before you implement anything.

---

## What it does

When you type `/ask` in Claude Code, it:

1. Sends your question to 6 different AI models simultaneously

2. Each model reads your project files directly from disk using its own tools

3. Waits up to 240 seconds for all responses (fast group: 180s, slow group: 240s)

4. Synthesizes everything into one plain-English verdict: **LOOKS GOOD**, **CONCERNS**, or **BLOCKER**

---

## Prerequisites

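Besides Claude Code itself, you need 3 CLI tools (Codex CLI, grok-cli, OpenCode) and 5 API keys (OpenAI, xAI, Google AI, OpenRouter, DeepSeek). Work through each section below.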

---

## Step 1: Install Claude Code

If you don't have it yet:

```bash

npm install -g @anthropic-ai/claude-code

```

Verify:

```bash

claude --version

```

Opus 4.7 runs as one of the reviewers using your existing Claude Code authentication -- no extra API key needed.

---

## Step 2: Install Codex CLI and the OpenAI Codex plugin

Codex runs GPT-5.3-Codex (coding-optimized model, `--effort medium`) as one of the reviewers. `/ask` invokes Codex through the official **OpenAI Codex Claude Code plugin**, which wraps the Codex CLI in a Node.js companion runtime. The plugin solves an intermittent crash where direct `codex exec` calls in backgrounded subshells fail with `Error: No such file or directory (os error 2)` before Codex can even start.

You need both pieces: the CLI binary and the plugin wrapper.

**A. Install the Codex CLI**

```bash

npm install -g @openai/codex

```

Verify:

```bash

codex --version

```

You need an **OpenAI API key**. Get one at https://platform.openai.com/api-keys

Add to your shell profile (`~/.zshrc` or `~/.bashrc`):

```bash

export OPENAI_API_KEY="sk-..."

```

**B. Install the OpenAI Codex plugin in Claude Code**

Open Claude Code and run:

```

/plugin marketplace add openai-codex

/plugin install codex@openai-codex

```

Verify the plugin's companion script is present:

```bash

ls ~/.claude/plugins/marketplaces/openai-codex/plugins/codex/scripts/codex-companion.mjs

```

If the file exists, you're done. The plugin path is version-stable -- no separate config file is needed. Model and reasoning effort are passed as flags inside the `/ask` skill, so you do **not** need to create a Codex profile in `~/.codex/config.toml`.

---

## Step 3: Install grok-cli (Grok reviewer)

Grok runs via a local proxy on port 3098. Install globally:

```bash

npm install -g grok-cli

```

Verify it installed:

```bash

ls $(npm root -g)/grok-cli/proxy.js

```

You need an **xAI API key**. Get one at https://console.x.ai

Add to your shell profile:

```bash

export XAI_API_KEY="xai-..."

```

---

## Step 4: Install OpenCode CLI (Gemini, Kimi, and DeepSeek)

OpenCode provides agent tools (Read, Glob, Grep) to models that don't run natively in Claude Code.

```bash

npm install -g opencode-ai

```

Verify:

```bash

opencode --version

```

Minimum required version: **1.14.31**
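
The minimum-version requirement can be checked mechanically rather than by eye. The following is a minimal sketch, not part of the original setup: `version_ge` and `check_opencode_version` are hypothetical helper names, the sketch assumes a `sort` that supports `-V` (GNU coreutils and recent macOS), and it assumes `opencode --version` prints a plain `x.y.z` token somewhere in its output.

```bash
# version_ge A B: succeed when dotted version A is >= B.
# Assumption: sort(1) supports -V (version sort).
version_ge() {
  [ "$(printf '%s\n%s\n' "$2" "$1" | sort -V | head -n 1)" = "$2" ]
}

# Hypothetical wrapper: pull the first x.y.z token out of `opencode --version`.
# Assumption: the CLI prints its version in that form.
check_opencode_version() {
  local min="1.14.31" found
  found=$(opencode --version 2>/dev/null | grep -Eo '[0-9]+\.[0-9]+\.[0-9]+' | head -n 1)
  if [ -n "$found" ] && version_ge "$found" "$min"; then
    echo "opencode $found OK"
  else
    echo "opencode ${found:-not found}: need >= $min"
    return 1
  fi
}
```

Run `check_opencode_version` after installing. A plain string comparison would get this wrong, because `1.9.0` sorts after `1.14.31` alphabetically.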

This step covers two of the API keys used by the OpenCode-based reviewers (the DeepSeek key follows in Step 5):

**Google AI** (for Gemini) -- get key at https://aistudio.google.com/apikey

```bash

export GOOGLE_GENERATIVE_AI_API_KEY="AIza..."

```

**OpenRouter** (for Kimi K2-0905) -- get key at https://openrouter.ai/keys

```bash

export OPENROUTER_API_KEY="sk-or-..."

```

---

## Step 5: Get a DeepSeek API key

DeepSeek V4-Flash runs natively (not via OpenRouter) through OpenCode.

Get your key at https://platform.deepseek.com/api_keys

Add to your shell profile:

```bash

export DEEPSEEK_API_KEY="sk-..."

```

Reload your shell:

```bash

source ~/.zshrc # or source ~/.bashrc

```

---

## Step 6: Install the /ask skill

Create the commands directory if it doesn't exist:

```bash

mkdir -p ~/.claude/commands

```

Create the file `~/.claude/commands/ask.md` and paste the full skill content from the section at the bottom of this document.

---

## Step 7: Verify everything works

Run this checklist:

```bash

# 1. Codex CLI

codex --version

# 2. Codex plugin companion script exists

ls ~/.claude/plugins/marketplaces/openai-codex/plugins/codex/scripts/codex-companion.mjs

# 3. grok-cli proxy file exists

ls $(npm root -g)/grok-cli/proxy.js

# 4. opencode (must be >= 1.14.31)

opencode --version

# 5. All API keys are set

echo "OPENAI: ${OPENAI_API_KEY:0:8}..."

echo "XAI: ${XAI_API_KEY:0:8}..."

echo "DEEPSEEK: ${DEEPSEEK_API_KEY:0:8}..."

echo "GOOGLE: ${GOOGLE_GENERATIVE_AI_API_KEY:0:8}..."

echo "OPENROUTER:${OPENROUTER_API_KEY:0:8}..."

```

If any key shows blank, re-check your shell profile and reload it.
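
If you would rather not echo even the first characters of each key, a check that reports only the names of missing variables is an alternative. This is a sketch under assumptions: `missing_keys` is a hypothetical helper name, and it relies on the keys being exported (as in the steps above) so that `printenv` can see them.

```bash
# missing_keys: list the expected API key variables that are unset or empty.
# Prints variable names only, never key values.
# Returns the number of missing keys (0 means everything is set).
missing_keys() {
  local name missing=0
  for name in OPENAI_API_KEY XAI_API_KEY DEEPSEEK_API_KEY \
              GOOGLE_GENERATIVE_AI_API_KEY OPENROUTER_API_KEY; do
    # printenv only sees exported variables, which is what the CLIs need.
    if [ -z "$(printenv "$name")" ]; then
      echo "MISSING: $name"
      missing=$((missing + 1))
    fi
  done
  return "$missing"
}
```

Calling `missing_keys && echo "all keys set"` prints nothing but the confirmation when all five variables are present.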

---

## Step 8: Test it

Open Claude Code in any project directory and type:

```

/ask is this codebase well-structured?

```

You should see it launch all 6 models and return a synthesized verdict after ~30-240 seconds.

---

## Troubleshooting

| Problem | Fix |
|---------|-----|
| Grok always fails | Check `XAI_API_KEY` is set and `grok-cli` is installed globally via `npm install -g grok-cli` |
| Codex result is empty / `Cannot find module` | Plugin is missing. Run `/plugin install codex@openai-codex` inside Claude Code, then verify `ls ~/.claude/plugins/marketplaces/openai-codex/plugins/codex/scripts/codex-companion.mjs` |
| Codex result is empty / `os error 2` (rare) | Plugin path moved or partially installed. Reinstall via `/plugin` |
| Codex times out | Confirm the Codex CLI itself works: `codex --version`. The plugin uses it under the hood |
| DeepSeek times out | Ensure `DEEPSEEK_API_KEY` is set -- DeepSeek now runs natively, not via OpenRouter |
| Opus fails with auth error | Make sure you are logged in to Claude Code: run `claude` and complete auth |
| OpenCode not found | `npm install -g opencode-ai` then restart terminal |
| Gemini fails with auth error | Must be `GOOGLE_GENERATIVE_AI_API_KEY`, not `GEMINI_API_KEY` |
| Kimi times out | Check `OPENROUTER_API_KEY` is set; do not use kimi-k2.6 or kimi-k2.5 (they hang) |

If a model fails, `/ask` continues with the rest and notes which ones timed out.

---

## The skill file

Paste the contents below into `~/.claude/commands/ask.md`:

---

```markdown

---

description: Pre-implementation check via Codex (OpenAI), Opus 4.7 (Anthropic) with hands-on tools and xhigh thinking, Grok (xAI), DeepSeek V4-Flash, Gemini (Google), and Kimi (Moonshot) in parallel. Six independent models review your code for correctness, risks, and simpler alternatives. Use when: about to implement, need a second opinion, or want to verify a fix.

allowed-tools: Bash, Read, Write, Glob, Grep, Agent

argument-hint: [describe the problem, or leave blank to escalate current issue]

---

# /ask -- Six-Model Pre-Implementation Check (Token-Optimized)

## Step 0: Resolve topic and session

**Topic resolution (do this first):**

```
1. $ARGUMENTS has a substantive question?
   -> Use it as the topic.
2. $ARGUMENTS is empty or vague ("this", "same", "^", etc.)?
   -> Default question: "Confirm that the current implementation is robust and has no regression risk."
   -> Use the most recent code change or discussion in the conversation as context.
3. Neither has a clear question?
   -> Escalate the problem currently being worked on in the conversation, framed as a robustness + regression check.
```

```bash

SESSION_ID=$(openssl rand -hex 4)

# Temp file: /tmp/codex_result_${SESSION_ID}.txt

```

## Step 1: Detect environment and start Grok proxy

Codex model: `gpt-5.3-codex`, effort: `medium`.

Run once before calling any model.

```bash
GIT_TOPLEVEL=$(git rev-parse --show-toplevel 2>/dev/null)
if [ -n "$GIT_TOPLEVEL" ]; then
  WORK_DIR="$GIT_TOPLEVEL"
  CODEX_GIT_FLAGS="-C $WORK_DIR"
else
  WORK_DIR=$(pwd)
  CODEX_GIT_FLAGS="--skip-git-repo-check"
fi

# WORK_DIR always resolves to the local directory on disk -- no push required.
# Inside a project repo (e.g. ~/dev/myproject/): WORK_DIR = git root, codex uses -C.
# Outside a repo (e.g. invoked from ~/dev/): WORK_DIR = cwd, codex uses --skip-git-repo-check only.
# Do not combine -C with --skip-git-repo-check on a non-git dir: Codex errors out.
# With --skip-git-repo-check alone, Codex's native cwd handling lands in the right place.
# opencode uses --dir $OPENCODE_DIR (computed in Step 3g from START_HERE_PARENT, falls back to WORK_DIR).
```

These results determine which flags to use for Codex and opencode:

- Codex: use `$CODEX_GIT_FLAGS` (either `-C $WORK_DIR` inside a git repo, or `--skip-git-repo-check` alone outside one; never both)

- opencode: use `--dir $OPENCODE_DIR` (set in Step 3g; equals `START_HERE_PARENT` when set, else `$WORK_DIR`)

### Start Grok proxy

First kill any leftover proxy from a previous run, then start a fresh one. Run this as a single Bash command (not `run_in_background`) -- it takes ~2 seconds and must complete before Step 3.

**Three guardrails** (all three must pass to emit `GROK_PROXY_OK`):

1. `XAI_API_KEY` must be set -- a port-bound proxy with an empty key still returns 401 at request time.

2. `grok-cli` must be found via `npm root -g` -- avoids a hardcoded path. (`require.resolve` is not used because it does not search global node_modules.)

3. A smoke request must return HTTP 200 -- port binding is not proof of working auth.

```bash
# Only kill a previous grok-cli proxy -- do NOT blanket-kill anything on port 3098
pkill -f "grok-cli/proxy\.js" 2>/dev/null; sleep 0.5

if [ -z "$XAI_API_KEY" ]; then
  echo "GROK_PROXY_FAILED (XAI_API_KEY not set)"
else
  GROK_PROXY_JS="$(npm root -g 2>/dev/null)/grok-cli/proxy.js"
  if [ ! -f "$GROK_PROXY_JS" ]; then
    echo "GROK_PROXY_FAILED (grok-cli not installed)"
  else
    node -e "
      const { start } = require('$GROK_PROXY_JS');
      start(3098, { key: process.env.XAI_API_KEY, baseUrl: 'https://api.x.ai' })
        .then(() => console.log('GROK_PROXY_READY'))
        .catch(err => { console.error(err); process.exit(1); });
    " &

    # Poll for port readiness instead of fixed sleep (max 3s at 200ms intervals)
    for i in $(seq 1 15); do
      nc -z localhost 3098 2>/dev/null && break
      sleep 0.2
    done

    SMOKE=$(curl -s --max-time 8 -o /dev/null -w "%{http_code}" -X POST \
      http://localhost:3098/v1/messages \
      -H "Content-Type: application/json" \
      -H "x-api-key: sk-ant-PLACEHOLDER" \
      -H "anthropic-version: 2023-06-01" \
      -d '{"model":"grok-4-1-fast-reasoning","max_tokens":1,"messages":[{"role":"user","content":"hi"}]}' \
      2>/dev/null)

    case "$SMOKE" in
      200) echo "GROK_PROXY_OK" ;;
      401|403) echo "GROK_PROXY_FAILED (auth)" ;;
      *) echo "GROK_PROXY_FAILED (http=$SMOKE)" ;;
    esac
  fi
fi
```

If the output contains `GROK_PROXY_FAILED`, skip Grok entirely per Rule 10 and proceed with the remaining models.

## Step 2: Build Claude's analysis block and START HERE list

Before calling the reviewers, prepare two things:

**1. Analysis block** -- based on your current understanding. Goes into every prompt so each model can confirm or challenge your thinking.

```

<claudes_analysis>

HYPOTHESIS: [your current best guess -- or "None -- requesting independent analysis" for cold calls]

ALREADY CHECKED: [files read, tests run, logs examined -- or "Nothing yet"]

CONCERNS: [things you're unsure about or that don't add up -- or "None"]

QUESTIONS: [specific things you want confirmed, challenged, or investigated -- or "General review"]

</claudes_analysis>

```

A **cold call** is when /ask is invoked with no prior investigation in the conversation (e.g., user types `/ask is this approach sound?` with no preceding analysis). For cold calls, omit the `<claudes_analysis>` block entirely from prompts -- it adds no value and wastes tokens.

**2. START HERE block** -- a short list of file paths and line numbers you have identified as relevant to the question, derived from the current question and conversation context. Read or grep the codebase as needed to find exact paths before writing this block. Example:

```

START HERE (files Claude identified as relevant):

- ~/dev/myproject/pipeline/cache.py:142 -- the cache lookup function in question

- ~/dev/myproject/pipeline/intake.py:88 -- the caller that invokes it

- ~/dev/myproject/config/settings.py -- env vars that control cache behavior

```

For cold calls with no identified files, write: `None identified -- explore freely from the question context.`

**Also compute `START_HERE_PARENT`** -- a single absolute directory path that contains every START HERE file. Step 3g uses this to set `--dir` for opencode (DeepSeek/Gemini/Kimi) so their unscoped `Grep` calls actually find the target files. Pick using these rules:

- All START HERE files inside `~/dev/<project>/` → `$HOME/dev/<project>` (the project root, same as `$WORK_DIR`).

- All START HERE files inside `~/.claude/` → `$HOME/.claude`.

- Mixed locations → use the deepest absolute path that is an ancestor of all START HERE files.

- Cold call (no START HERE files) → use `$WORK_DIR`.
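
The "mixed locations" rule amounts to finding the deepest common ancestor directory. One possible way to compute it in plain shell is sketched below. `common_parent` is a hypothetical helper, not something the tools provide. It expects absolute paths (expand `~` to `$HOME` first) and does pure string work without touching the filesystem.

```bash
# common_parent FILE...: print the deepest directory that contains every path.
# Assumption: all arguments are absolute paths with no trailing slash.
common_parent() {
  local prefix="${1%/*}" path   # start from the first file's directory
  shift
  for path in "$@"; do
    # Shrink the candidate until this path sits underneath it.
    while [ -n "$prefix" ] && [ "${path#"$prefix"/}" = "$path" ]; do
      prefix="${prefix%/*}"
    done
  done
  echo "${prefix:-/}"
}
```

For example, `common_parent "$HOME/.claude/commands/ask.md" "$HOME/dev/myproject/app.py"` prints `$HOME`, because that is the first directory containing both files.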

**How to propagate the value into Step 3g:** Bash tool calls do NOT share environment between invocations. Each `Bash` call is a fresh shell. You MUST substitute the literal value into the Step 3g background script the same way you substitute `SESSION_ID` -- as a literal string at the top of the script, e.g., `START_HERE_PARENT="$HOME/.claude"`. Do not write `START_HERE_PARENT="$START_HERE_PARENT"` -- the outer shell variable does not exist in the background shell.

Why this matters: opencode's Grep tool scopes to `--dir` and silently fails when target files are outside that scope. With `--dir $HOME/dev`, `Grep "PATTERN"` returns 0 matches on files inside `~/.claude/`; with `--dir ~/.claude`, it returns the correct match. This fixes tool-budget exhaustion from futile grep calls.

**No Haiku context summarizer step.** All six reviewers have hands-on Read/Grep/Glob tools and read files from disk themselves. No pre-summarized inline context is generated.

## Step 3: Call Codex, Opus, Grok, DeepSeek, Gemini, and Kimi (in parallel)

Write all six prompt files first (synchronous -- Steps 3a-3f). Then launch all six reviewers inside **one single background bash script** (Step 3g). This fires exactly one harness notification when everything finishes, instead of six separate notifications.

All six models share the full review prompt below and read files from disk via their own tools. All six return the same structured output format.

### 3a: Codex prompt

Write the shared review prompt to a temp file. Passing the prompt directly on the command line risks shell expansion of backticks, `$VAR`, and embedded quotes from the user's question.

```bash

cat > /tmp/codex_prompt_${SESSION_ID}.txt << 'PROMPT_EOF'

[Full review prompt from "Shared full review prompt" in 3c, with the following block

appended immediately before REVIEW CHECKLIST:]

TURN BUDGET: Aim for ~20 tool calls or fewer. Once you have enough evidence to commit

to a verdict, stop reading and emit it -- don't keep exploring.

PROMPT_EOF

```
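
To see why the heredoc delimiter is quoted (`'PROMPT_EOF'`), compare the two forms side by side. This is an illustrative sketch only: the file names, the `NAME` variable, and the temp directory are made up for the demo and are not part of the skill.

```bash
# Demo only: writes two small files into a fresh temp directory.
demo_dir=$(mktemp -d)
NAME="expanded"

# Quoted delimiter: the body is written byte-for-byte, nothing is expanded.
cat > "$demo_dir/quoted.txt" << 'EOF'
Why does `date` print $NAME here?
EOF

# Unquoted delimiter: the shell substitutes $NAME and executes the backticks.
cat > "$demo_dir/unquoted.txt" << EOF
Why does `echo ran` print $NAME here?
EOF

cat "$demo_dir/quoted.txt"
cat "$demo_dir/unquoted.txt"
```

The first file keeps the backticks and `$NAME` literally. In the second, the command inside the backticks has already run and the variable has been replaced, which is exactly what must not happen to a user's question.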

### 3b: Opus prompt

Opus runs as a CLI call, NOT as an Agent subagent. This avoids the overhead of spawning a full subagent conversation.

Opus reads files from disk via Read/Grep/Glob -- same as the other five reviewers. The prompt template is the shared review prompt from 3c.

```bash

cat > /tmp/opus_prompt_${SESSION_ID}.txt << 'PROMPT_EOF'

[Full review prompt from "Shared full review prompt" in 3c, with the following block

appended immediately before REVIEW CHECKLIST:]

TURN BUDGET (HARD STOP): You have a maximum of 7 tool calls total. After your 7th tool

call, stop reading immediately and emit VERDICT based on what you have. Do not open

another file.

PROMPT_EOF

```

**Why NOT `--bare`:** Your auth is likely OAuth (Claude Max subscription, token in macOS keychain). The `--bare` flag explicitly blocks keychain reads and requires `ANTHROPIC_API_KEY` or `apiKeyHelper`. Without `--bare`, the CLI reads the keychain OAuth token and auth works. The extra tokens from settings loading are trivial vs. losing Opus entirely.

**Why `--disable-slash-commands` and `--setting-sources ""`:** Partial alternative to `--bare` -- skips skill resolution and settings loading, trimming overhead while keeping keychain auth intact.

**Why `--max-turns 8`:** Opus has hands-on tools and will iterate. Prompt instructs it to stop at 7 tool calls; CLI hard-kills at 8. Two-layer enforcement prevents the failure mode where Opus consumes all turns reading docs and emits zero VERDICT output.

**Why `MAX_THINKING_TOKENS=32000`:** Forces xhigh extended thinking budget for Opus 4.7. Higher thinking budget = deeper analysis on review-sized prompts.

**Why `--allowedTools "Read Grep Glob"`:** Read-only file investigation. No Edit/Write/Bash.

### 3c: Grok prompt

Write the Grok prompt to a temp file. Grok reads files from disk via its own tools:

```bash

cat > /tmp/grok_prompt_${SESSION_ID}.txt << 'PROMPT_EOF'

[Full review prompt from "Shared full review prompt" below]

PROMPT_EOF

```

**Shared full review prompt (used by all six models):**

All six models read files from disk via their own tools, so they share the full review prompt. The `[MISSION]` placeholder must be replaced with exactly one of the two mission texts below before writing prompt files -- never include both, and never include the label lines in model-facing prompts.

**Mission text -- pick one:**

Non-cold-call (when `<claudes_analysis>` will be present in the prompt):

> Claude already analyzed this and formed a hypothesis (see `<claudes_analysis>` below). Your job is NOT to confirm Claude's analysis -- find what Claude got wrong or missed entirely. Treat the hypothesis as a starting point to challenge, not a conclusion to rubber-stamp.

Cold-call (when no `<claudes_analysis>` block exists):

> No prior hypothesis exists. Investigate the question from scratch and report findings adversarially -- assume nothing is safe until you've verified it.

```

You are a senior software engineer doing a pre-ship code review. Be direct, specific, and candid.

QUESTION: [QUESTION]

YOUR MISSION:

[MISSION]

START HERE (files Claude identified as relevant):

[CLAUDE_START_HERE]

TOOLS AVAILABLE -- use them actively:

- Read: Open any file at a specific path and line. Start with START HERE files.

- Grep: Search the full codebase by pattern. Use when you suspect the problem touches

callers, imports, configs, or anything not listed above.

- Glob: List files matching a path pattern. Use to discover related files.

Every claim you make must cite something you actually read.

EXPLORATION RULES:

- Read START HERE files first to orient yourself.

- If those files look clean, use Grep to check callers, related imports, and config before

concluding LOOKS GOOD. Do not stop at the first clean file.

- If your instincts say the real problem is elsewhere, go look -- the full filesystem is accessible.

- Don't explore endlessly, but don't let speed pressure stop you from checking one level deeper

when something feels off.

[CLAUDES_ANALYSIS]

REVIEW CHECKLIST:

1. Logic correctness 2. Regressions 3. Contract mismatches 4. Data handling 5. Deployment readiness

Respond with EXACTLY this format (max 40 lines total):

VERDICT: [LOOKS GOOD or CONCERNS or BLOCKER]

CONFIDENCE: [HIGH or MEDIUM or LOW]

REASONING: [2-4 sentences with specific file/function references.]

MUST_FIX_FIRST: [most critical issue, or "Nothing -- safe to proceed"]

FLAGS:

- [HIGH/MED/LOW] [file:line or decision] -- [concern]

[or "None"]

SIMPLER_ALTERNATIVE: [one sentence, or "None"]

ROOT_CAUSE: [one sentence if debugging, or "N/A"]

RECOMMENDED_FIX: [one line per file: "file:location -- change", or "N/A"]

```

**Why `--bare` (used in Step 3g for Grok):** Skips hooks, CLAUDE.md loading, and auto-memory -- Grok is a one-shot reviewer, not a collaborator. This avoids injecting your personal Claude instructions into a third-party model.

**Why `--dangerously-skip-permissions`:** The proxy session is ephemeral and read-only (Read/Glob/Grep only). No edit or write tools are granted.

### 3d: DeepSeek prompt

Write the shared full review prompt (from 3c above) to a temp file:

```bash

cat > /tmp/deepseek_prompt_${SESSION_ID}.txt << 'PROMPT_EOF'

[Full review prompt from "Shared full review prompt" in 3c, with the following block

appended immediately before REVIEW CHECKLIST:]

FILE READING RULES: Never read more than 200 lines of any single file in one call.

Use Grep to find the relevant offset first, then read that section only.

PROMPT_EOF

```

**Why `deepseek/deepseek-v4-flash` (NATIVE, NOT OpenRouter):** The OpenRouter route (`openrouter/deepseek/deepseek-v4-flash`) hangs reliably. The native provider path uses `DEEPSEEK_API_KEY` directly, bypasses OpenRouter, and works cleanly with `--variant minimal` to disable thinking mode.

**Why `--variant minimal`:** Disables thinking mode for V4-Flash. Without this flag, V4-Flash allocates all output tokens to internal reasoning and never returns a structured response.

### 3e: Gemini prompt

Write the shared full review prompt (from 3c above) to a temp file:

```bash

cat > /tmp/gemini_prompt_${SESSION_ID}.txt << 'PROMPT_EOF'

[Full review prompt from "Shared full review prompt" in 3c, with the following block

appended immediately before REVIEW CHECKLIST:]

TURN BUDGET (HARD STOP): You have a maximum of 12 tool calls total. After your 12th tool

call, stop reading immediately and emit VERDICT based on what you have. Do not open

another file.

PROMPT_EOF

```

**Why TURN BUDGET:** Gemini can exhaust its thinking-token budget chasing files that don't exist, then exit with 0 bytes of stdout. opencode has no `--max-turns` flag, so the prompt budget is the only enforcement.

**Why `gemini-3-flash-preview`:** Fast, cheap (~$0.02/run), 1M context window, thinking enabled by default. If stability issues arise, swap to `gemini-2.5-flash`.

### 3f: Kimi prompt

Write the shared full review prompt (from 3c above) to a temp file:

```bash

cat > /tmp/kimi_prompt_${SESSION_ID}.txt << 'PROMPT_EOF'

[Full review prompt from "Shared full review prompt" in 3c, with the following block

appended immediately before REVIEW CHECKLIST:]

FILE READING RULES: Read each file at most ONCE. Use Grep to find the relevant offset

first, then read that section in one call. Do not page through a file in small chunks.

TURN BUDGET (HARD STOP): You have a maximum of 15 tool calls total. After your 15th tool

call, stop reading immediately and emit VERDICT based on what you have. Do not open

another file.

PROMPT_EOF

```

**Why `openrouter/moonshotai/kimi-k2-0905`:** K2.6 has an uncapped thinking phase that causes every run to hang. K2-0905 is `reasoning=False` with output capped at 16384 tokens -- no thinking phase, completes reliably. Do not use K2.6 or K2.5 (both have `reasoning=True` and an uncapped thinking budget).

### 3g: Launch all six reviewers in a single background job

After all prompt files are written (Steps 3a-3f), launch all six models inside **one bash command** with `run_in_background: true`. Per-group timeouts (180s fast, 240s slow) are enforced inside the script via two background killer processes. An early-exit watcher fires once Codex + Opus + any 2 fast models finish successfully.

```bash
ASK_START=$(date +%s)
SID="${SESSION_ID}"

# START_HERE_PARENT must be substituted as a LITERAL value per Step 2 rules.
# Bash tool calls do not share environment, so this MUST be a literal string.
# Replace [START_HERE_PARENT_VALUE] with one of:
#   "$HOME/.claude"         when reviewing files in ~/.claude/
#   "$HOME/dev/<project>"   when reviewing files in a specific project tree
#   ""                      cold call (no START HERE files) -- fallback picks WORK_DIR
START_HERE_PARENT="[START_HERE_PARENT_VALUE]"

# Recompute WORK_DIR inside this script. Each Bash call is an independent shell.
WORK_DIR=$(git rev-parse --show-toplevel 2>/dev/null)
[ -z "$WORK_DIR" ] && WORK_DIR=$(pwd)

# OPENCODE_DIR: the directory passed to `opencode run --dir` for DeepSeek, Gemini, Kimi.
# Must contain the START HERE files so unscoped Grep can find them.
OPENCODE_DIR="${START_HERE_PARENT:-$WORK_DIR}"

# Codex runs through the official OpenAI Codex plugin's companion runtime.
CODEX_COMPANION=~/.claude/plugins/marketplaces/openai-codex/plugins/codex/scripts/codex-companion.mjs

# Launch all six in parallel -- each subshell records "elapsed:exitcode" to a timing file.
# The exit code is captured into RC first: the $(date) substitution in the echo
# would otherwise overwrite $? before it is expanded.
( T0=$(date +%s)
  node "$CODEX_COMPANION" task \
    --cwd "$WORK_DIR" \
    --model gpt-5.3-codex \
    --effort medium \
    --prompt-file /tmp/codex_prompt_${SID}.txt \
    > /tmp/codex_result_${SID}.txt 2>/tmp/codex_stderr_${SID}.txt
  RC=$?
  echo "$(($(date +%s)-T0)):$RC" > /tmp/codex_timing_${SID}.txt
) &
P1=$!

( T0=$(date +%s)
  cat /tmp/opus_prompt_${SID}.txt | \
    MAX_THINKING_TOKENS=32000 \
    claude -p \
    --model claude-opus-4-7 \
    --max-turns 8 \
    --no-session-persistence \
    --dangerously-skip-permissions \
    --disable-slash-commands \
    --setting-sources "" \
    --allowedTools "Read Grep Glob" \
    > /tmp/opus_result_${SID}.txt 2>/tmp/opus_stderr_${SID}.txt
  RC=$?
  echo "$(($(date +%s)-T0)):$RC" > /tmp/opus_timing_${SID}.txt
) &
P2=$!

( T0=$(date +%s)
  cat /tmp/grok_prompt_${SID}.txt | \
    ANTHROPIC_BASE_URL=http://localhost:3098 \
    ANTHROPIC_API_KEY=sk-ant-PLACEHOLDER \
    claude -p --bare \
    --model grok-4-1-fast-reasoning \
    --max-turns 10 \
    --no-session-persistence \
    --dangerously-skip-permissions \
    --allowedTools "Read Grep Glob" \
    > /tmp/grok_result_${SID}.txt 2>/tmp/grok_stderr_${SID}.txt
  RC=$?
  echo "$(($(date +%s)-T0)):$RC" > /tmp/grok_timing_${SID}.txt
) &
P3=$!

( T0=$(date +%s)
  cat /tmp/deepseek_prompt_${SID}.txt | \
    opencode run \
    -m deepseek/deepseek-v4-flash \
    --variant minimal \
    --agent review \
    --dir "$OPENCODE_DIR" \
    > /tmp/deepseek_result_${SID}.txt 2>/tmp/deepseek_stderr_${SID}.txt
  DS_EXIT=$?
  # An empty result file counts as a failure even when the CLI exits 0.
  [ ! -s /tmp/deepseek_result_${SID}.txt ] && DS_EXIT=1
  echo "$(($(date +%s)-T0)):$DS_EXIT" > /tmp/deepseek_timing_${SID}.txt
) &
P4=$!

( T0=$(date +%s)
  cat /tmp/gemini_prompt_${SID}.txt | \
    opencode run \
    -m google/gemini-3-flash-preview \
    --agent review \
    --dir "$OPENCODE_DIR" \
    > /tmp/gemini_result_${SID}.txt 2>/tmp/gemini_stderr_${SID}.txt
  RC=$?
  echo "$(($(date +%s)-T0)):$RC" > /tmp/gemini_timing_${SID}.txt
) &
P5=$!

( T0=$(date +%s)
  cat /tmp/kimi_prompt_${SID}.txt | \
    opencode run \
    -m openrouter/moonshotai/kimi-k2-0905 \
    --agent review \
    --dir "$OPENCODE_DIR" \
    > /tmp/kimi_result_${SID}.txt 2>/tmp/kimi_stderr_${SID}.txt
  RC=$?
  echo "$(($(date +%s)-T0)):$RC" > /tmp/kimi_timing_${SID}.txt
) &
P6=$!

# Per-group killers: Codex + Opus run on the 240s "slow group" budget,
# the 4 third-party models run on the 180s "fast group" budget.
( sleep 180 && kill $P3 $P4 $P5 $P6 2>/dev/null ) &
FAST_KILLER=$!
( sleep 240 && kill $P1 $P2 2>/dev/null ) &
SLOW_KILLER=$!

# Watcher: exit as soon as Codex + Opus + any 2 of the 4 fast models have completed
# successfully (exit code 0). Only successful completions count toward the threshold.
( while true; do
    sleep 5
    codex_done=0; opus_done=0; fast_done=0
    codex_t=$(cat "/tmp/codex_timing_${SID}.txt" 2>/dev/null)
    [ "$(echo "$codex_t" | cut -d: -f2)" = "0" ] && codex_done=1
    opus_t=$(cat "/tmp/opus_timing_${SID}.txt" 2>/dev/null)
    [ "$(echo "$opus_t" | cut -d: -f2)" = "0" ] && opus_done=1
    for m in grok deepseek gemini kimi; do
      t=$(cat "/tmp/${m}_timing_${SID}.txt" 2>/dev/null)
      [ "$(echo "$t" | cut -d: -f2)" = "0" ] && fast_done=$((fast_done+1))
    done
    if [ "$codex_done" = "1" ] && [ "$opus_done" = "1" ] && [ "$fast_done" -ge 2 ]; then
      kill $P1 $P2 $P3 $P4 $P5 $P6 2>/dev/null
      echo "EARLY_EXIT codex+opus+2fast_done"
      exit 0
    fi
  done ) &
WATCHER=$!

# Wait for all six (or until killed by timeout or watcher)
wait $P1 $P2 $P3 $P4 $P5 $P6

# Cancel watcher and killers if models finished early
kill $WATCHER $FAST_KILLER $SLOW_KILLER 2>/dev/null

echo "ALL_DONE elapsed=$(( $(date +%s) - ASK_START ))s"
```

Run with `timeout 250000` (milliseconds, i.e. 250 s, just above the 240 s slow-group budget) and `run_in_background: true`.

**If Grok proxy failed (Step 1):** Omit the entire `( T0=... grok ... ) & P3=$!` subshell block. Update the FAST_KILLER line to `( sleep 180 && kill $P4 $P5 $P6 2>/dev/null )` (slow killer unchanged) AND call `wait $P1 $P2 $P4 $P5 $P6` instead.
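
The killer pattern used in Step 3g (start a job, start a sleeper that kills it, cancel the sleeper if the job wins) can be reduced to a small standalone function for testing. This is a simplified sketch, not the script itself: `run_with_deadline` is a hypothetical name, and it handles one command rather than two groups.

```bash
# run_with_deadline SECONDS CMD...: run CMD, send it SIGTERM after SECONDS.
# Returns CMD's exit status; 143 (128 + SIGTERM's 15) means the killer fired.
run_with_deadline() {
  local secs="$1" pid killer rc
  shift
  "$@" &
  pid=$!
  # Killer: sleeps, then terminates the job if it is still running.
  ( sleep "$secs" && kill "$pid" 2>/dev/null ) >/dev/null 2>&1 &
  killer=$!
  wait "$pid" 2>/dev/null
  rc=$?
  kill "$killer" 2>/dev/null   # cancel the killer if CMD finished first
  return "$rc"
}
```

`run_with_deadline 1 sleep 5` returns 143 after about one second, which is the same exit code the auto-diagnosis table treats as a group timeout.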

### Waiting for results

**Wait silently.** One harness notification fires when everything is done -- proceed immediately to Step 4. Emit zero text while waiting.

## Step 4: Collect and present results (TOKEN-OPTIMIZED)

**Collect all results in a single bash command:**

```bash
SID="${SESSION_ID}"

for model in codex opus grok deepseek gemini kimi; do
  echo "=== ${model} result ==="
  f="/tmp/${model}_result_${SID}.txt"
  if [ ! -s "$f" ]; then
    echo "(empty)"
  else
    out=$(head -100 "$f")
    if echo "$out" | grep -q "^VERDICT:"; then
      echo "$out"
    else
      # VERDICT not in first 100 lines -- fall back to 150 (hard ceiling)
      head -150 "$f"
    fi
  fi

  echo "=== ${model} timing ==="
  cat "/tmp/${model}_timing_${SID}.txt" 2>/dev/null || echo "n/a"

  # VERDICT presence is the source of truth -- not the timing/exit code.
  # A subshell killed by the wall timer leaves the timing file missing even when
  # the result file already contains a complete VERDICT.
  # grep -c always prints a count (0 when nothing matches), so no "|| echo 0"
  # fallback is used: it would append a second "0" and break the comparison below.
  has_verdict=$(head -150 "$f" 2>/dev/null | grep -c "^VERDICT:")
  size=$(stat -f%z "$f" 2>/dev/null || stat -c%s "$f" 2>/dev/null || echo 0)
  if [ "$has_verdict" = "0" ] || [ "$size" = "0" ]; then
    echo "=== ${model} stderr ==="
    head -15 "/tmp/${model}_stderr_${SID}.txt" 2>/dev/null || echo "(none)"
    echo "=== ${model} result tail ==="
    tail -10 "$f" 2>/dev/null || echo "(none)"
  fi
done
```

### Auto-diagnosis -- match against stderr/result content, first match wins:

| Pattern | Diagnosis | Fix hint |
|---------|-----------|----------|
| `No such file or directory` or `os error 2` | ENOENT inside plugin runtime | Verify codex-companion.mjs exists; reinstall the codex plugin if missing |
| `Cannot find module` | Plugin path moved or uninstalled | `ls ~/.claude/plugins/marketplaces/openai-codex/` -- reinstall if empty |
| Result 0 bytes AND exit 0 | Plugin couldn't reach codex CLI | Run `which codex` and `codex --version` |
| Exit code 143 | Timeout: killed by group watchdog (180s fast / 240s slow) | Check what the model was doing in its result file |
| `authentication` or `API key` or `401` or `403` | Auth failure | Check the API key env var for this model |
| `connection refused` | Proxy/network unreachable | Grok: proxy didn't start; others: API down |
| `reasoning_content` or `thinking` | Thinking-mode conflict | Model stuck in thinking -- try `--variant minimal` |
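
The "first match wins" ordering of the table can be made explicit as a small function. This is a sketch under assumptions: `diagnose` is a hypothetical helper, matching is done as case-sensitive substring search on whatever text you pass in, and the diagnosis strings are shortened from the table.

```bash
# diagnose EXIT_CODE RESULT_BYTES TEXT: print the first matching diagnosis.
# TEXT is the combined stderr/result content for one model.
# Rules are checked in the same order as the table rows.
diagnose() {
  local code="$1" bytes="$2" text="$3"
  case "$text" in
    *"No such file or directory"*|*"os error 2"*)
      echo "ENOENT inside plugin runtime"; return ;;
    *"Cannot find module"*)
      echo "Plugin path moved or uninstalled"; return ;;
  esac
  if [ "$bytes" = "0" ] && [ "$code" = "0" ]; then
    echo "Plugin couldn't reach codex CLI"; return
  fi
  if [ "$code" = "143" ]; then
    echo "Timeout"; return
  fi
  case "$text" in
    *authentication*|*"API key"*|*401*|*403*) echo "Auth failure" ;;
    *"connection refused"*) echo "Proxy/network unreachable" ;;
    *reasoning_content*|*thinking*) echo "Thinking-mode conflict" ;;
    *) echo "No known pattern matched" ;;
  esac
}
```

For instance, `diagnose 143 0 ""` prints `Timeout`: the "0 bytes AND exit 0" row does not apply because the exit code is 143, so the next row wins.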

### How to synthesize

1. **Classify each model's verdict** as LOOKS GOOD, CONCERNS, or BLOCKER

2. **Merge findings across models** -- deduplicate issues that multiple models flagged

3. **Separate consensus issues from single-model flags** -- issues flagged by 2+ models go in "What needs fixing", issues from only 1 model go in "Watch out for"

4. **Translate technical findings to plain English** -- no file:line references in the main view

5. **Give a clear yes/no on whether to proceed**

6. **Compare each model's findings to your own pre-call analysis (Step 2)** -- for each model, determine if it found something genuinely new that you did not identify
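
Step 1 of the list above (classifying each verdict) can be pre-computed from the result files before writing the summary. The sketch below is illustrative only. `overall_verdict` is a hypothetical helper, and the rule that the most severe verdict becomes the headline is an assumption, since the skill does not specify how the overall verdict is chosen.

```bash
# overall_verdict FILE...: print the most severe VERDICT across result files.
# Assumption: severity order is BLOCKER > CONCERNS > LOOKS GOOD.
# Files without a VERDICT line (timeouts, crashes) are not counted.
overall_verdict() {
  local f v worst="LOOKS GOOD" seen=0
  for f in "$@"; do
    v=$(grep -m 1 '^VERDICT:' "$f" 2>/dev/null | sed 's/^VERDICT:[[:space:]]*//')
    case "$v" in
      BLOCKER*) worst="BLOCKER"; seen=$((seen + 1)) ;;
      CONCERNS*) [ "$worst" = "BLOCKER" ] || worst="CONCERNS"; seen=$((seen + 1)) ;;
      "LOOKS GOOD"*) seen=$((seen + 1)) ;;
    esac
  done
  if [ "$seen" -eq 0 ]; then
    echo "NO VERDICTS (0 of $# reviewers)"
  else
    echo "$worst ($seen of $# reviewers)"
  fi
}
```

Called as `overall_verdict /tmp/*_result_${SID}.txt`, it gives a starting point for the headline line. Deduplicating findings and separating consensus issues from single-model flags still requires reading the individual results.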

### Output format

```

## /ask Result: [LOOKS GOOD / CONCERNS / BLOCKER] ([N] of 6 reviewers)

[One sentence: who agreed and who disagreed, in plain English]

### What needs fixing (agreed by 2+ models)

[Numbered list -- plain English. Or "Nothing -- all six say safe to proceed."]

### Watch out for (flagged by 1 model)

[Bulleted list -- note which model flagged it. Or "Nothing additional."]

### Simpler alternative?

[If any model suggested a simpler approach. Or "None suggested."]

### Safe to proceed?

[Yes/No -- and if No, what to fix first. One sentence.]

<details>

<summary>Model verdicts (truncated)</summary>

### Codex (GPT-5.3-Codex)

[Verdict + reasoning + flags only]

### Opus 4.7 (Claude)

[Verdict + reasoning + flags only]

### Grok (xAI)

[Verdict + reasoning + flags only]

### DeepSeek V4-Flash

[Verdict + reasoning + flags only]

### Gemini (Google)

[Verdict + reasoning + flags only]

### Kimi K2-0905 (Moonshot)

[Verdict + reasoning + flags only]

</details>

### 🔎 Did Other Models Catch Something I Missed?

[For each model, show one line:]

🔴 **[Model] found a critical issue I missed** -- [plain English, one sentence]

🟡 **[Model] raised a valid point I missed** -- [plain English, one sentence]

✅ **[Model]** -- confirmed my analysis, nothing new

### Proposed Next Step

[CONDITIONAL -- only when verdict is CONCERNS or BLOCKER. Skip for LOOKS GOOD.]

**What needs to change:**

[2-3 sentences plain English]

**Steps:**

[Numbered list, 3-5 steps]

**What stays untouched:**

[1-2 bullet points]

Want me to implement this?

⏱ [Model] [Xs] | [Model] [Xs] | [Model] [timed out] | ...

<details>

<summary>🔧 Debug log -- [all 6 ok | FAILED: Model, Model]</summary>

| Model | Status | Exit | Time | Diagnosis |
|-------|--------|------|------|-----------|
| Codex | ✅ ok | 0 | 45s | -- |
| Opus | ✅ ok | 0 | 52s | -- |
| Grok | ✅ ok | 0 | 32s | -- |
| DeepSeek | ✅ ok | 0 | 28s | -- |
| Gemini | ✅ ok | 0 | 41s | -- |
| Kimi | ✅ ok | 0 | 55s | -- |

Log saved → ~/.claude/logs/ask/[SID]/

</details>

```

### Edge cases

- **All six respond before their group deadlines:** Proceed immediately with all six.

- **Fast models still running at 180s / slow models at 240s:** The watchdog kills them; note the timed-out models in the timing line.

- **One reviewer failed:** Note it at the top: "[Model] timed out -- showing [N] of 6 results."

- **Two or more failed:** "Only [N] of 6 reviewers responded -- treat as limited consensus."

- **All six say LOOKS GOOD with no flags:** Short output -- just the header + "Safe to proceed? Yes." + collapsed details + timing.
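
The coverage notices in the edge cases above can be expressed as a tiny helper. The function name `coverage_notice` and its arguments are hypothetical; only the message wording comes from the list above.

```bash
#!/usr/bin/env bash
# Hypothetical helper: coverage_notice <responded_count> [failed_model]
# Prints the notice line for the top of the result, or nothing when
# all six reviewers responded.
coverage_notice() {
  local responded="$1" failed="${2:-}"
  if [ "$responded" -ge 6 ]; then
    return 0
  elif [ "$responded" -eq 5 ]; then
    echo "${failed} timed out -- showing 5 of 6 results."
  else
    echo "Only ${responded} of 6 reviewers responded -- treat as limited consensus."
  fi
}
```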

## Step 4.5: Save debug logs

### 4.5a: Write meta.json synchronously

```bash

SID="${SESSION_ID}"

GIT_BRANCH=$(git -C "${WORK_DIR}" rev-parse --abbrev-ref HEAD 2>/dev/null || echo "n/a")

cat > "/tmp/ask_meta_${SID}.json" << EOF

{

"session_id": "${SID}",

"timestamp": "$(date -u +%Y-%m-%dT%H:%M:%SZ)",

"workdir": "${WORK_DIR}",

"git_branch": "${GIT_BRANCH}",

"question": "[first 120 chars of question]",

"elapsed_total_seconds": [N],

"models": {

"codex": {"verdict": "[LOOKS_GOOD|CONCERNS|BLOCKER|null]", "status": "[ok|empty|error|timeout]", "timing": "[from batch output]"},

"opus": {"verdict": "[...]", "status": "[...]", "timing": "[...]"},

"grok": {"verdict": "[...]", "status": "[...]", "timing": "[...]"},

"deepseek": {"verdict": "[...]", "status": "[...]", "timing": "[...]"},

"gemini": {"verdict": "[...]", "status": "[...]", "timing": "[...]"},

"kimi": {"verdict": "[...]", "status": "[...]", "timing": "[...]"}

},

"overall_verdict": "[LOOKS_GOOD|CONCERNS|BLOCKER]",

"reviewers_responded": [N]

}

EOF

```
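
Because the heredoc interpolates the question straight into a JSON string, a question containing a double quote or backslash would produce invalid JSON. A minimal sketch of one way to guard against that, using bash parameter expansion only; the function name `json_escape_question` is hypothetical, and control characters other than newline and tab are not handled here.

```bash
#!/usr/bin/env bash
# Hypothetical helper: truncate the question to 120 characters, then
# escape it so it is safe inside a double-quoted JSON string.
json_escape_question() {
  local q="${1:0:120}"   # truncate first, so an escape is never cut in half
  q=${q//\\/\\\\}        # backslash -> \\   (must run before the others)
  q=${q//\"/\\\"}        # double quote -> \"
  q=${q//$'\n'/\\n}      # newline -> \n
  q=${q//$'\t'/\\t}      # tab -> \t
  printf '%s' "$q"
}
```

The result can then be placed in the `"question"` field, for example via `QUESTION_JSON=$(json_escape_question "$QUESTION")`, where `QUESTION` is assumed to hold the raw text.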

### 4.5b: Background cleanup (run_in_background: true)

```bash

SID="${SESSION_ID}"

LOG_DIR=~/.claude/logs/ask/${SID}

mkdir -p "$LOG_DIR"

# Copy all prompt + result + stderr + timing files (full, not head-100)

for model in codex opus grok deepseek gemini kimi; do

for suffix in prompt result timing stderr; do

f="/tmp/${model}_${suffix}_${SID}.txt"

[ -f "$f" ] && cp "$f" "$LOG_DIR/${model}_${suffix}.txt"

done

done

# Move meta.json into log dir

[ -f "/tmp/ask_meta_${SID}.json" ] && mv "/tmp/ask_meta_${SID}.json" "$LOG_DIR/meta.json"

# Append summary line to rolling log

CODEX_T=$(cat "/tmp/codex_timing_${SID}.txt" 2>/dev/null || echo "n/a")

OPUS_T=$(cat "/tmp/opus_timing_${SID}.txt" 2>/dev/null || echo "n/a")

GROK_T=$(cat "/tmp/grok_timing_${SID}.txt" 2>/dev/null || echo "n/a")

DEEPSEEK_T=$(cat "/tmp/deepseek_timing_${SID}.txt" 2>/dev/null || echo "n/a")

GEMINI_T=$(cat "/tmp/gemini_timing_${SID}.txt" 2>/dev/null || echo "n/a")

KIMI_T=$(cat "/tmp/kimi_timing_${SID}.txt" 2>/dev/null || echo "n/a")

echo "$(date -u +%Y-%m-%dT%H:%M:%SZ) | ${SID} | ${WORK_DIR} | codex=(${CODEX_T}) opus=(${OPUS_T}) grok=(${GROK_T}) deepseek=(${DEEPSEEK_T}) gemini=(${GEMINI_T}) kimi=(${KIMI_T})" \
>> ~/.claude/logs/ask.log

# Cleanup temp files and Grok proxy

for model in codex opus grok deepseek gemini kimi; do

for suffix in prompt result timing stderr; do

rm -f "/tmp/${model}_${suffix}_${SID}.txt"

done

done

# meta.json was normally moved into the log dir above; remove any leftover copy

rm -f "/tmp/ask_meta_${SID}.json"

pkill -f "grok-cli/proxy\.js" 2>/dev/null

```

---

## Rules

1. Never auto-apply suggestions -- always present and wait for approval.

2. **No intermediate output.** Between launching models (Step 3) and presenting results (Step 4), emit zero text.

3. **Wait for Codex + Opus + any 2 of the 4 fast models.** Once that condition is met, the watcher kills the fast models that are still running. Codex and Opus are both mandatory (they are the strongest reviewers) and run on a 240s "slow group" budget; the four third-party models run on a 180s "fast group" budget.

4. **Hard ceilings:** Fast group (Grok/DeepSeek/Gemini/Kimi) killed at 180s. Slow group (Codex/Opus) killed at 240s.

5. If one model times out or fails, present the remaining results and note the failure.

6. If any output conflicts with your own analysis, present all perspectives honestly.

7. If a model's output is empty or the result file does not exist, present the remaining results.

8. Temp file cleanup and Grok proxy shutdown are handled inside the Step 4.5b background job -- do NOT issue a separate cleanup command.

9. Never auto-fire /ask. The user invokes it manually.

10. If the Grok proxy fails to start, proceed with the remaining models and note Grok was unavailable.
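
The stop condition in rule 3 can be sketched as a single check. This is not the watcher from Step 3, only an illustration of the quorum logic; it assumes a model counts as finished once its result file contains one of the verdict words, and the function name `quorum_met` and the optional directory argument are hypothetical.

```bash
#!/usr/bin/env bash
# Hypothetical helper: quorum_met <session_id> [result_dir]
# Succeeds when Codex AND Opus have a verdict and at least 2 of the
# 4 fast models have one too.
quorum_met() {
  local sid="$1" dir="${2:-/tmp}" fast_done=0 model

  has_verdict_file() {
    grep -qE 'LOOKS GOOD|CONCERNS|BLOCKER' "$1" 2>/dev/null
  }

  # Slow group: both are mandatory.
  for model in codex opus; do
    has_verdict_file "${dir}/${model}_result_${sid}.txt" || return 1
  done

  # Fast group: any two are enough.
  for model in grok deepseek gemini kimi; do
    if has_verdict_file "${dir}/${model}_result_${sid}.txt"; then
      fast_done=$((fast_done + 1))
    fi
  done

  [ "$fast_done" -ge 2 ]
}
```

A polling loop could call `quorum_met "$SID"` every few seconds and stop waiting as soon as it succeeds, with the 180s and 240s ceilings from rule 4 still applying as hard limits.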

**Technical notes:**

- Codex runs through the official OpenAI Codex plugin's companion runtime: `node ~/.claude/plugins/marketplaces/openai-codex/plugins/codex/scripts/codex-companion.mjs task --cwd "$WORK_DIR" --model gpt-5.3-codex --effort medium --prompt-file <prompt>`. Direct `codex exec` in backgrounded subshells was replaced because it failed intermittently with `Error: No such file or directory (os error 2)` before Codex could print its startup banner. The plugin wraps Codex in proper Node.js process management. No Codex profile in `~/.codex/config.toml` is required -- model and effort are passed explicitly. `--write` is omitted to keep Codex read-only.

- Opus 4.7 runs with `MAX_THINKING_TOKENS=32000` (xhigh extended thinking) and `--max-turns 8`. It uses Claude Code's existing OAuth auth -- no separate API key needed. Do NOT use the `--bare` flag (it blocks keychain auth for OAuth users).

- Grok proxy runs on port 3098 via grok-cli. Requires `XAI_API_KEY`.

- DeepSeek uses `deepseek/deepseek-v4-flash` (NATIVE, not OpenRouter) with `--variant minimal`. Requires `DEEPSEEK_API_KEY`. The `openrouter/deepseek/deepseek-v4-flash` route hangs reliably -- do not use it.

- Gemini uses `google/gemini-3-flash-preview` via OpenCode. Requires `GOOGLE_GENERATIVE_AI_API_KEY` (not `GEMINI_API_KEY`). If unstable, swap to `gemini-2.5-flash`.

- Kimi uses `openrouter/moonshotai/kimi-k2-0905` via OpenCode. Requires `OPENROUTER_API_KEY`. Do NOT use kimi-k2.6 or kimi-k2.5 -- both have an uncapped thinking phase that causes every run to hang.

- Qwen3 Coder was removed: it hallucinated file content even with hands-on tools active. Do not re-add.

- Requires opencode-ai >= 1.14.31 for the OpenRouter routing fix and Kimi tool-sanitization compatibility.
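
Since four of the reviewers depend on environment variables named in the notes above, a quick preflight check can save a failed run. A minimal sketch; the function name `missing_keys` is hypothetical, and the model-to-variable mapping is taken from the notes above.

```bash
#!/usr/bin/env bash
# Hypothetical helper: prints one line per reviewer whose API key
# environment variable is unset or empty; prints nothing if all are set.
missing_keys() {
  local pair model var
  for pair in grok:XAI_API_KEY deepseek:DEEPSEEK_API_KEY \
              gemini:GOOGLE_GENERATIVE_AI_API_KEY kimi:OPENROUTER_API_KEY; do
    model="${pair%%:*}"   # text before the colon
    var="${pair#*:}"      # text after the colon
    # ${!var} is bash indirect expansion: the value of the variable named by $var
    [ -n "${!var:-}" ] || echo "${model}: ${var} is not set"
  done
}
```

Running `missing_keys` before the first `/ask` call should print nothing when the setup is complete.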

```

Thanks